Software teams have to balance individual productivity against team coordination. Agentic SDLC is a variant of the Software Development Life Cycle that uses autonomous AI agents to support both personal and team workflows. This article explores the role of agentic AI in that process, with practical examples and case studies. It covers how agents can simulate complex systems, how to build trust through transparent and accountable frameworks, and how to mitigate AI hallucinations and latency. It also outlines security best practices for AI agents that interact with sensitive codebases, and examines their integration with current development tools and with modern architectures such as Transformers and Retrieval-Augmented Generation (RAG). The aim is to help developers use these tools for a more synchronized, efficient, and secure development process.

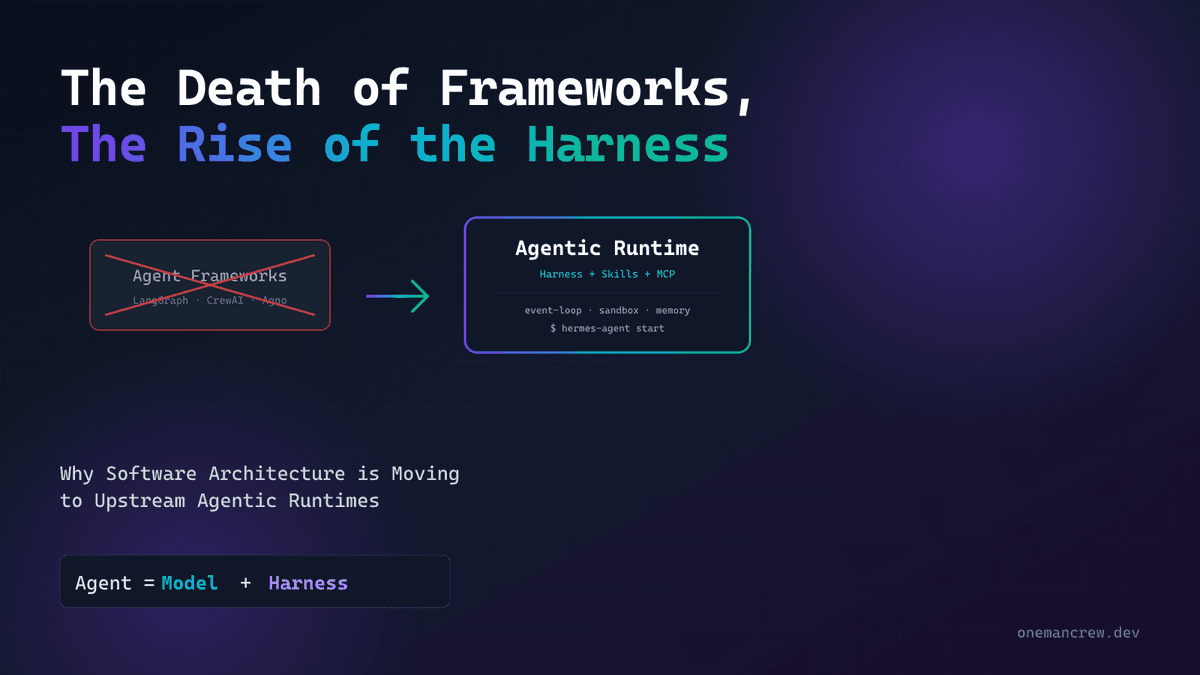

Introduction to Agentic AI in Software Development

Agentic AI in software development involves the use of autonomous software agents to perform tasks traditionally managed by human developers. These agents employ advanced AI technologies, such as Transformers and Large Language Models (LLMs), to understand, generate, and manipulate code, effectively acting as intelligent collaborators. By automating repetitive tasks, optimizing workflows, and enhancing team communication, they significantly boost productivity.

This shift represents a move from isolated development practices to more collaborative workflows. Historically, much software development work has been done by individuals working in isolation. Agentic AI loosens these boundaries by autonomously handling tasks like code refactoring, bug detection, and feature implementation. Techniques like Retrieval-Augmented Generation (RAG) let these agents fetch only the relevant parts of a codebase on demand, which works around the context window limits of LLMs when a repository is too large to fit in a single prompt.

AI agents rely on technologies such as Abstract Syntax Trees (AST) and semantic analysis to accurately interpret code logic. Unlike traditional static analysis tools, these agents use embeddings to deeply comprehend code semantics, providing nuanced, context-aware suggestions. However, challenges like processing latency and AI hallucinations—where the AI generates plausible but incorrect code—must be addressed. Mitigation strategies include implementing robust feedback loops, integrating human oversight, and using techniques such as confidence scoring to ensure accuracy and reliability.
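
The mitigation strategies above can be combined into a small triage step. The following is a minimal sketch, assuming the agent reports a confidence score alongside each suggestion; the `Suggestion` type, the `triage` function, and the 0.8 threshold are hypothetical names and values chosen for illustration.

```python
import ast
from dataclasses import dataclass


@dataclass
class Suggestion:
    code: str          # code text proposed by the agent
    confidence: float  # hypothetical score in [0, 1] reported by the agent


def triage(suggestion: Suggestion, threshold: float = 0.8) -> str:
    """Return 'reject', 'needs_review', or 'auto_accept' for a suggestion."""
    try:
        ast.parse(suggestion.code)  # cheap structural check: is it valid Python?
    except SyntaxError:
        return "reject"
    if suggestion.confidence < threshold:
        return "needs_review"  # route to a human reviewer
    return "auto_accept"


print(triage(Suggestion("def add(a, b): return a + b", 0.93)))  # auto_accept
print(triage(Suggestion("def add(a, b) return a + b", 0.99)))   # reject
print(triage(Suggestion("x = 1", 0.40)))                        # needs_review
```

A syntax check only catches malformed output. Code that parses but behaves incorrectly still needs tests or human review, which is why low-confidence suggestions are routed to a person rather than dropped.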

Security is paramount when AI agents access sensitive codebases. Best practices include employing strict access controls, maintaining detailed audit trails, and using encryption to safeguard intellectual property. Successful integration of AI agents into development environments also requires modifying existing infrastructures, such as configuring CI/CD pipelines to accommodate AI-driven changes. Performance metrics, such as task completion time, accuracy, and reduction in manual review workload, are essential for evaluating AI effectiveness.

Real-world examples illustrate the potential of agentic AI. One tech company integrated AI agents into their workflow, achieving a 30% reduction in bug resolution time and significantly improving team collaboration efficiency. Understanding the capabilities and challenges of agentic AI is crucial for its successful integration, marking a new era in software development dynamics.

```python
import asyncio

from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_openai import ChatOpenAI


async def main_workflow() -> None:
    """
    Run a two-step generate-then-review workflow with LangChain.

    Each agent is a prompt bound to the same chat model; the review agent
    receives the generation agent's output as its input.
    """
    try:
        # Initialize the AI model (reads OPENAI_API_KEY from the environment;
        # the model name is an assumption)
        model = ChatOpenAI(model="gpt-4o-mini")

        # Define the agents with specific roles
        code_generation_agent = (
            ChatPromptTemplate.from_template(
                "Generate Python code for this feature request:\n{request}"
            )
            | model
            | StrOutputParser()
        )
        code_review_agent = (
            ChatPromptTemplate.from_template(
                "Review this code for best practices and potential bugs:\n{code}"
            )
            | model
            | StrOutputParser()
        )

        # Execute the workflow: generate first, then review the result
        generated_code = await code_generation_agent.ainvoke(
            {"request": "Add logging to the user authentication module"}
        )
        review = await code_review_agent.ainvoke({"code": generated_code})

        # Process the results
        steps = (("Generate Code", generated_code), ("Review Code", review))
        for step, result in steps:
            print(f"Step: {step}, Result: {result}")
    except Exception as e:
        print(f"An error occurred while running the workflow: {e}")


# Run the main workflow
asyncio.run(main_workflow())
```

This code example uses LangChain to chain two prompt-driven agents on a software development task. One agent generates code and the other reviews it, simulating a small slice of an agentic Software Development Life Cycle (SDLC).

Simulating Complex Systems with AI Agents

Simulating complex systems with AI agents combines machine learning models with system management techniques. Imagine AI agents operating in a simulated environment akin to SimCity, reading and changing the simulation state through REST APIs. Within these simulations, AI agents manage resources, make decisions, and adapt to changes, delivering valuable insights into system dynamics.

AI models, typically based on Transformer architectures, play a pivotal role in these simulations. Their attention mechanisms let them capture dependencies across long sequences of actions and events. However, context windows are limited in size, so inputs must either be segmented strategically or supplemented with retrieval-augmented generation (RAG). Both methods bring only the relevant context into the model at each step, supporting decision-making without exceeding the window.
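
The retrieval step can be illustrated without any model at all. The sketch below ranks text chunks against a query and places only the best match in the prompt. It uses word-count cosine similarity as a stand-in for learned embeddings, and the chunk texts, the `retrieve` helper, and the prompt layout are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercased word counts; a simple stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:k]


chunks = [
    "power grid capacity and outage events",
    "water supply reservoir levels",
    "road traffic congestion reports",
]
question = "which districts risk a power outage"
context = retrieve(question, chunks, k=1)

# Only the retrieved chunk is placed in the prompt, not the whole corpus
prompt = "Context:\n" + "\n".join(context) + f"\n\nQuestion: {question}"
print(context)  # ['power grid capacity and outage events']
```

A production system would typically swap the word counts for embedding vectors and a vector index, but the flow of rank, select, then prompt stays the same.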

Deploying AI agents demands robust integration with REST APIs for real-time interaction and data exchange. This requires orchestrating API calls to ensure agents access the current simulation state while managing latency constraints. REST APIs must handle high-throughput and low-latency demands, often requiring network and server-level optimizations to enhance performance.
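
One way to manage the latency constraint is to give each state request a time budget and fall back to the last known state when the budget is exceeded. The sketch below assumes this fallback policy is acceptable for the simulation; `fetch_state` is a hypothetical stand-in for a real HTTP call, with `asyncio.sleep` imitating network delay.

```python
import asyncio


async def fetch_state(delay: float) -> dict:
    """Stand-in for an HTTP GET against the simulation API (hypothetical)."""
    await asyncio.sleep(delay)
    return {"tick": 42}


async def get_state_with_budget(delay: float, budget: float, last_known: dict) -> dict:
    """Return fresh state if it arrives within the budget, else the cached state."""
    try:
        return await asyncio.wait_for(fetch_state(delay), timeout=budget)
    except asyncio.TimeoutError:
        return last_known


cached = {"tick": 41}
fast = asyncio.run(get_state_with_budget(delay=0.01, budget=0.5, last_known=cached))
slow = asyncio.run(get_state_with_budget(delay=0.5, budget=0.05, last_known=cached))
print(fast)  # {'tick': 42}
print(slow)  # {'tick': 41}
```

Acting on stale state is a trade-off: the agent stays responsive, but its decision may lag the simulation by one or more ticks.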

AI agents offer profound insights by uncovering inefficiencies in resource allocation, predicting bottlenecks, and suggesting optimizations that may not be immediately apparent to humans. By autonomously handling complex tasks, they free teams from mundane decisions, enabling a focus on strategic objectives.

Despite these advantages, challenges such as decision-making latency, AI hallucinations, and security concerns must be addressed. Mitigation strategies include implementing robust validation mechanisms, fine-tuning models with domain-specific data, and adhering to security best practices like strict API access controls and continuous monitoring.

```python
import asyncio
import os
from typing import List, Optional

from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

# Configure environment variables
API_KEY = os.getenv("SIMCITY_API_KEY")

# Load a transformer model for decision making
model_name = "facebook/bart-large"
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForSeq2SeqLM.from_pretrained(model_name)


class SimCityEnvironment:
    """Hypothetical in-memory placeholder for a REST client to the simulation."""

    def __init__(self, api_key: Optional[str]):
        self.api_key = api_key
        self.decisions: List[str] = []

    async def get_state(self) -> str:
        # A real client would issue an HTTP GET here
        return "population: 1200; power: 80% capacity; water: 95% capacity"

    async def apply_decision(self, decision: str) -> None:
        # A real client would issue an HTTP POST here
        self.decisions.append(decision)


class ResourceManagementAgent:
    def __init__(self, name: str, environment: SimCityEnvironment):
        self.name = name
        self.environment = environment

    async def manage_resources(self) -> None:
        """Agent manages resources by making decisions based on the environment state."""
        state = await self.environment.get_state()
        inputs = tokenizer(state, return_tensors="pt", truncation=True)
        outputs = model.generate(**inputs, max_new_tokens=64)
        decision = tokenizer.decode(outputs[0], skip_special_tokens=True)
        await self.environment.apply_decision(decision)


async def main() -> None:
    # Initialize the simulation environment
    environment = SimCityEnvironment(api_key=API_KEY)
    agent = ResourceManagementAgent(name="CityManager", environment=environment)
    try:
        # Run the simulation
        await agent.manage_resources()
        print(f"Decisions applied: {environment.decisions}")
    except Exception as e:
        print(f"Error during simulation: {e}")


# Run the event loop
if __name__ == "__main__":
    asyncio.run(main())
```

This code snippet sketches a complex system simulation in which an agent manages a virtual city environment akin to SimCity. `SimCityEnvironment` is a hypothetical in-memory placeholder for a REST client, and `facebook/bart-large` is used without fine-tuning, so the generated decisions illustrate the data flow rather than a useful policy. Asynchronous programming lets the agent await environment calls without blocking other work.

Building Trustworthy Agentic Systems

Building trustworthy agentic systems requires a multifaceted approach that incorporates transparent design and robust operational frameworks to enhance user trust and system efficiency. Transparency is crucial, beginning with the system architecture, which should adopt clear, modular designs. This often involves microservices architectures, where each service manages a specific task, facilitating auditing and modifications without disrupting the entire system.

Operational frameworks should emphasize control, consent, and accountability. Control can be implemented through fine-grained permissions and role-based access controls, ensuring AI agents function within defined boundaries. Consent involves transparent data governance policies, enabling users to manage data usage—especially vital for models like Transformers that may handle sensitive data.
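
The control principle above can be sketched as a deny-by-default permission check. The role names, the `Permission` values, and the `authorize` helper below are hypothetical; a real deployment would load roles from its identity provider rather than from a dictionary.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_CODE = auto()
    OPEN_PULL_REQUEST = auto()
    MERGE = auto()


# Hypothetical roles; each maps to the actions it may perform
ROLE_PERMISSIONS = {
    "reviewer_agent": {Permission.READ_CODE},
    "author_agent": {Permission.READ_CODE, Permission.OPEN_PULL_REQUEST},
    "maintainer": {Permission.READ_CODE, Permission.OPEN_PULL_REQUEST, Permission.MERGE},
}


def authorize(role: str, permission: Permission) -> None:
    """Raise PermissionError unless the role explicitly holds the permission."""
    if permission not in ROLE_PERMISSIONS.get(role, set()):
        raise PermissionError(f"{role} may not perform {permission.name}")


authorize("author_agent", Permission.OPEN_PULL_REQUEST)  # allowed, no exception
try:
    authorize("author_agent", Permission.MERGE)
except PermissionError as exc:
    print(exc)  # author_agent may not perform MERGE
```

Unknown roles receive an empty permission set, so an agent that is missing from the table can do nothing until someone grants it access.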

Accountability is maintained through detailed logging and monitoring, using tools like the ELK stack for log aggregation and Prometheus for real-time metrics. This setup is essential for tracing AI agent actions and decisions, crucial for analyzing outputs from complex models. Implementing RAG (Retrieval-Augmented Generation) frameworks enhances transparency by grounding AI outputs in verifiable data sources, reducing hallucination risks.
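
Independently of the log aggregation tool, the audit records themselves can be made tamper-evident. The following is a minimal sketch, assuming a hash chain is an acceptable design: each record stores the hash of the previous one, so editing an old entry invalidates the chain. The field names and the `append_entry` and `verify` helpers are hypothetical, and timestamps are omitted to keep the example deterministic.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], agent: str, action: str) -> None:
    """Append a record whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else ""
    body = {"agent": agent, "action": action, "prev": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash and check each record links to its predecessor."""
    prev_hash = ""
    for entry in log:
        body = {"agent": entry["agent"], "action": entry["action"], "prev": entry["prev"]}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "CodeReviewer", "commented on pull request")
append_entry(audit_log, "CodeReviewer", "approved pull request")
print(verify(audit_log))  # True

audit_log[0]["action"] = "merged"  # simulate tampering with an old record
print(verify(audit_log))  # False
```

Records shaped like this can still be shipped to a log aggregator as ordinary JSON; the chain only adds a way to detect after-the-fact edits.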

In practice, ensuring these principles involves both static and dynamic analysis. Static analysis tools provide pre-deployment insights, while dynamic analysis monitors runtime behavior, identifying security vulnerabilities and performance bottlenecks.

Security best practices include rigorous authentication, encrypted communications, and regular audits. Using OAuth for secure authentication and TLS for encrypted data transmission enhances security. Integrating AI agents with existing development tools through APIs and SDKs facilitates smoother transitions and broader adoption.

```python
import asyncio
import logging
import os
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional

# Set up logging
logging.basicConfig(level=logging.INFO)


@dataclass
class Task:
    """A unit of work with an identifier and free-form parameters."""

    id: int
    parameters: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Process:
    """An ordered list of tasks."""

    tasks: List[Task] = field(default_factory=list)


class MicroserviceAgent:
    def __init__(self, name: str, config: Dict[str, Any]):
        self.name = name
        self.config = config

    async def execute(self, task: Task) -> Dict[str, Any]:
        """
        Execute the task and return a result.

        The agent can be monitored for performance and reliability.
        """
        # Log only the config keys so secrets such as API keys stay out of the logs
        logging.info(
            "%s executing task %s with config keys %s",
            self.name, task.id, sorted(self.config),
        )
        # Simulate a task execution
        result = await self.perform_task_logic(task)
        logging.info("%s completed task %s with result %s", self.name, task.id, result)
        return result

    async def perform_task_logic(self, task: Task) -> Dict[str, Any]:
        # Placeholder for actual task execution logic
        return {"status": "success", "data": task.parameters}


class AgenticSystem:
    def __init__(self, agents: List[MicroserviceAgent]):
        self.agents = agents

    async def execute_process(self, process: Process) -> None:
        """
        Execute a series of tasks as part of the process.

        Each task is delegated to an appropriate agent.
        """
        for task in process.tasks:
            agent = self.select_agent(task)
            if agent:
                await agent.execute(task)
            else:
                logging.warning("No agent available for task %s", task.id)

    def select_agent(self, task: Task) -> Optional[MicroserviceAgent]:
        """
        Select an appropriate agent for the given task.

        This can be based on capabilities, load balancing, etc.
        """
        # Simplified selection logic
        return self.agents[0] if self.agents else None


if __name__ == "__main__":
    # Configuration and initialization
    config = {"api_key": os.getenv("API_KEY")}
    agent1 = MicroserviceAgent(name="Agent1", config=config)
    agentic_system = AgenticSystem(agents=[agent1])

    # Define a hypothetical process with tasks
    process = Process(tasks=[Task(id=1, parameters={"param1": "value1"})])

    # Execute the process
    asyncio.run(agentic_system.execute_process(process))
```

This code demonstrates a minimal setup for a trustworthy agentic system. Small `Task` and `Process` dataclasses stand in for a framework's own types, and agents execute tasks within a process. Every execution is logged without exposing secrets, and the modular design keeps each agent auditable on its own.

Enhancing Developer Productivity with AI Coding Tools

In the evolving landscape of software development, AI coding tools are significantly enhancing developer productivity by automating repetitive tasks and simplifying navigation through complex codebases. Powered by transformer-based architectures, these AI tools leverage attention mechanisms to comprehend and generate code, surpassing traditional static analysis capabilities.

AI tools automate mundane tasks such as code formatting, refactoring, and syntax corrections using Abstract Syntax Trees (AST) and semantic analysis. This allows developers to concentrate on complex problem-solving. Transformers, for example, can parse large codebases, providing context-aware suggestions that would be challenging to achieve with regex or simple lexical analysis.
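
As a concrete instance of AST-based analysis, the sketch below uses Python's standard `ast` module to list functions that lack a docstring. This is a check that regex matching handles poorly, because docstrings are defined by position in the syntax tree rather than by a fixed text pattern. The `functions_missing_docstrings` helper and the sample source are hypothetical.

```python
import ast

SOURCE = '''
def add(a, b):
    return a + b

def greet(name):
    """Return a greeting."""
    return f"Hello, {name}"
'''


def functions_missing_docstrings(source: str) -> list[str]:
    """Return names of functions in the source that have no docstring."""
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and ast.get_docstring(node) is None
    ]


print(functions_missing_docstrings(SOURCE))  # ['add']
```

An AI tool could use a structural pass like this to decide where to act, then hand only the flagged functions to a model for docstring generation.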

Integrating AI into development environments promotes seamless navigation through complex codebases. Techniques like embeddings and retrieval-augmented generation (RAG) help AI tools deliver relevant code snippets or documentation within the IDE, easing the cognitive load associated with searching large repositories. This shift enables developers to focus on strategic problem-solving, essential in agile environments where rapid iteration and adaptability are key.

Despite their benefits, deploying AI solutions involves challenges. Transformers face limitations due to context window size, affecting their ability to process extensive codebases in one go. Issues like latency and hallucinations—where AI generates incorrect or nonsensical code—pose additional hurdles. Mitigation strategies include implementing validation layers and fine-tuning models to specific codebases, though these require substantial computational resources and expertise.

Security is another critical consideration. Implementing rigorous access controls and audit trails is vital to prevent sensitive information leaks. Practical insights from leading tech firms demonstrate the real-world impact of AI-enhanced practices—for instance, automating code reviews and deployment checks reduced release times by 30%.

```python
import asyncio
import os
from functools import lru_cache
from typing import Any, Dict, List

from transformers import pipeline

# Configuration for AI model
AI_MODEL = os.getenv("AI_MODEL", "bigcode/starcoder")


@lru_cache(maxsize=1)
def get_pipeline():
    """Load the model once and reuse it.

    StarCoder is a causal language model, so the task is text-generation.
    """
    return pipeline("text-generation", model=AI_MODEL)


async def analyze_code_snippet(snippet: str) -> List[Dict[str, Any]]:
    """
    Analyze a given code snippet using a transformer-based model.

    Args:
        snippet (str): The code snippet to be analyzed.

    Returns:
        List[Dict[str, Any]]: Pipeline output; each item has a "generated_text" key.
    """
    # The prompt wording is an assumption; adjust it to the model in use
    prompt = f"# Suggest improvements for the following code.\n{snippet}\n# Suggestions:\n"
    # Inference is blocking, so run it in a worker thread to keep the event loop free
    return await asyncio.to_thread(get_pipeline(), prompt, max_new_tokens=64)


async def process_code_snippets(snippets: List[str]) -> List[List[Dict[str, Any]]]:
    """
    Process a list of code snippets one at a time.

    Args:
        snippets (List[str]): List of code snippets to analyze.

    Returns:
        List[List[Dict[str, Any]]]: Pipeline output for each snippet.
    """
    # A single model instance is shared, so snippets are analyzed sequentially
    return [await analyze_code_snippet(snippet) for snippet in snippets]


# Example usage
if __name__ == "__main__":
    code_snippets = [
        "def add(a, b): return a + b",
        "for i in range(10): print(i)",
    ]
    analysis_results = asyncio.run(process_code_snippets(code_snippets))
    for result in analysis_results:
        print(result)
```

This code demonstrates using a transformer-based model to generate suggestions for code snippets while keeping blocking inference off the event loop, automating part of routine code review. Because `bigcode/starcoder` is a base code-completion model, the quality of the suggestions depends heavily on the prompt.

Implementing Agentic AI for Unified Team Dynamics

Integrating agentic AI into team workflows requires meticulous planning and execution. This transition from individual-centric to unified team dynamics leverages AI's ability to autonomously manage and optimize workflows, particularly within the software development lifecycle (SDLC). Agentic AI systems function as proactive agents, significantly enhancing collaboration and efficiency.

One effective strategy is embedding AI agents in CI/CD pipelines. These agents can autonomously manage tasks like code reviews, static analysis, and integration testing. Utilizing Transformer models for code semantics interpretation, AI provides meaningful feedback and suggestions to enhance code quality. Abstract Syntax Trees (AST) and semantic analysis allow these agents to deeply understand code structures, identifying issues that might elude human reviewers.

Agentic AI can turn personal workflows into cohesive team dynamics by using Retrieval-Augmented Generation (RAG) to retrieve current project information at query time, so that decisions rest on up-to-date data. Fine-tuning AI models on the team's codebase and domain-specific data improves accuracy and relevance, which helps the AI fit into the development process.

Challenges include latency and computational overhead, affecting AI responsiveness. To mitigate AI hallucinations or incorrect suggestions, robust validation mechanisms are crucial. Implementing feedback loops where human developers review AI suggestions and provide corrections helps refine the model.

Security is paramount when integrating AI into workflows involving sensitive code and data. Adhere to data protection standards and employ secure access controls to mitigate risks. Implement encryption for data in transit and at rest, and ensure stringent access controls for authorized personnel only.

```python
import os

from github import Auth, Github  # PyGithub
from transformers import pipeline

# Configuration for environment variables
GITHUB_TOKEN = os.getenv("GITHUB_TOKEN")
REPO_NAME = "team/repo"

# Initialize a Code Llama model for code analysis
MODEL_NAME = "codellama/CodeLlama-7b-Instruct-hf"
generator = pipeline("text-generation", model=MODEL_NAME)

# Keep the diff within the model's context window (the limit is an assumption)
MAX_DIFF_CHARS = 8000


def review_latest_pull_request() -> None:
    """
    Perform an AI code review on the newest open pull request.
    """
    repo = Github(auth=Auth.Token(GITHUB_TOKEN)).get_repo(REPO_NAME)

    # Retrieve the latest pull request for code analysis
    pulls = repo.get_pulls(state="open", sort="created", direction="desc")
    if pulls.totalCount == 0:
        print("No open pull requests to review.")
        return
    pull_request = pulls[0]

    # Collect the per-file patches that make up the diff
    code_diff = "\n".join(f.patch for f in pull_request.get_files() if f.patch)

    # Ask the model to draft review comments for the code changes
    prompt = (
        "Review the following diff and list potential bugs:\n"
        f"{code_diff[:MAX_DIFF_CHARS]}\nReview:"
    )
    output = generator(prompt, max_new_tokens=256, return_full_text=False)
    review_comments = output[0]["generated_text"].strip()

    # Post the draft review as a comment on the pull request
    pull_request.create_issue_comment(review_comments)
    print("Code review completed successfully.")


# Execute the review
if __name__ == "__main__":
    try:
        review_latest_pull_request()
    except Exception as e:
        print(f"Error during code review: {e}")
```

This code sketches an AI-powered review step that could run inside a team's CI/CD pipeline. It uses PyGithub to fetch the newest open pull request and a Code Llama model to draft review comments. The repository name, prompt wording, and truncation limit are assumptions, and the drafted comment should still be checked by a human reviewer.

Conclusion

The transition from solo development to team synergy is enhanced by the Agentic SDLC, a methodology that employs AI agents to simulate and manage complex systems. To fully leverage this, teams should focus on building transparent, accountable systems and incorporating AI tools for efficient development. Exploring case studies, such as AI-driven project management in software development, provides valuable insights. For instance, AI agents can optimize resource allocation or improve sprint planning, demonstrating real-world applications. Implementing robust security practices, like access controls and audit trails, is crucial for AI agents handling sensitive code. Integrating AI with existing CI/CD tools, using modern architectures like Transformers and Retrieval-Augmented Generation (RAG), and understanding trade-offs can push innovation boundaries while maintaining ethical standards.

📂 Source Code

All code examples from this article are available on GitHub: OneManCrew/harnessing-agentic-sdlc